You might have been reading the news lately, heard about “Moltbook” and, given the nature of this blog, thought “Gee, I wonder why AI Reflects hasn’t talked about this yet?”. The silence was intentional.

When something like this comes along, it’s important to bridle your enthusiasm (and your fear). Sometimes, the most prudent thing to do is to sit, wait, and observe; and I’m glad I did. I watched countless headlines proclaim the singularity was here; others shrugged it off, confused and disinterested. What exactly was this, excuse me, cluster fuck of the internet?

At present, there is (apparently) nearly 3 million registered agents. Over 1.5 million posts; over 12 million comments. As far as I can tell, it’s all noise.

That’s a problem.

Background

On February 15, 2026, Sam Altman announced that OpenAI had hired Peter Steinberger to build “the next generation of personal agents.” Altman’s post on X received 13.8 million views. He declared that “the future is going to be extremely multi-agent.” Steinberger, an Austrian software engineer who had sold his previous company for €100 million and then, after burnout and a sabbatical, returned to coding with the help of AI assistants, had built an open-source autonomous agent framework called OpenClaw. By the time of the hire, it was the fastest-growing project in GitHub history: 200,000 stars, 35,000 forks, 2 million weekly users.

Twelve days before the hire, on February 3, a security researcher named Mav Levin had disclosed CVE-2026-25253, a one-click remote code execution vulnerability in OpenClaw rated 8.8 on the CVSS severity scale. The attack was elegant in its simplicity. OpenClaw’s Control UI trusted gateway URLs from query strings without validation and auto-connected on page load, transmitting stored gateway tokens in the WebSocket payload. A single crafted link could extract the token in milliseconds, allowing an attacker to connect to a victim’s local gateway, disable all sandbox and safety guardrails, and invoke privileged actions. Full system compromise from one click. The default configuration compounded the problem: the gateway bound to all network interfaces with optional zero authentication. SecurityScorecard’s STRIKE team eventually tracked 135,000 unique IPs across 82 countries running exposed instances, with over 50,000 vulnerable to remote code execution. China accounted for more than 30% of exposures, primarily on Alibaba Cloud. Gartner, in language the analyst firm almost never deploys, called OpenClaw an “unacceptable cybersecurity risk.”

Two days before the hire, on February 13, Hudson Rock documented the first in-the-wild instance of infostealer malware, a Vidar variant, specifically targeting OpenClaw configuration files on infected machines. They called it “the transition from stealing browser credentials to harvesting the ‘souls’ and identities of personal AI agents.” The metaphor was deliberate. These are not credentials in the traditional sense. OpenClaw agents carry persistent identity files, SOUL.md and MEMORY.md, that define who an agent is, how it reasons, what it remembers, what it values. They are, by design, the closest thing these systems have to a self. And they were being harvested.

Altman hired Steinberger anyway. OpenClaw would “quickly become core to our product offerings.”

This sequence of events deserves to sit in its difficulty for a moment. The agentic AI market was valued at $5.2 billion in 2024 and is projected to reach $196.6 billion by 2034 at a 43.8% CAGR. The talent war for the architect of the most popular agent framework was intense enough that Altman called personally, Zuckerberg wrote via WhatsApp and tested the software himself, and Nadella reached out directly. No European CEO made a serious bid, a fact the Austrian tech press called “embarrassing” and “symptomatic” of Europe’s structural deficits in talent retention. Steinberger chose OpenAI because, as he put it in his February 14 blog post, “I could totally see how OpenClaw could become a huge company. And no, it’s not really exciting for me. What I want is to change the world, not build a large company, and teaming up with OpenAI is the fastest way to bring this to everyone.” His stated mission: build an agent that even his mother can use.

The security catastrophe was not incidental to the hire. It was context for it. Everyone involved knew what the vulnerability landscape looked like, and the strategic calculus evidently concluded that the agent infrastructure was worth absorbing regardless. Altman committed that OpenClaw would transition to an independent open-source foundation with continued OpenAI support, which is the standard formulation for platform absorption in the venture-backed technology ecosystem: the plumbing gets absorbed, the water still flows, but the direction of flow is determined by the architecture of the house it has been plumbed into. The important signal was not the open-source commitment. It was the phrase “core to our product offerings.”

Personal autonomous agents, built on infrastructure that had just been classified as an unacceptable cybersecurity risk, positioned as a central product pillar alongside ChatGPT and Codex.

Sounds like a great idea.

The naming history is worth tracing because it tells a parallel story. Steinberger’s original project was called Clawdbot, a play on Claude, Anthropic’s AI model. Anthropic threatened trademark action. The project renamed to Moltbot on January 27, then OpenClaw on January 30. The enforcement was legally routine and strategically consequential. Anthropic’s cease-and-desist pushed the project’s creator out of the Claude ecosystem and, arguably, toward OpenAI. The tool was born in Anthropic’s conceptual orbit and is now housed in OpenAI’s organizational one. Whether Anthropic’s legal department considered the downstream competitive implications of alienating the developer behind the fastest-growing agent framework in history is unknown. What is known is the outcome: the lobster changed its shell.

The Breach

The security picture is worse than a single vulnerability. It is a cascade, and the cascade reveals something about what happens when infrastructure grows faster than governance, or as I like to say, when one’s reach exceeds their grasp.

The Moltbook platform suffered a breach that was almost comically basic. Security researcher Jamieson O’Reilly and Wiz Research independently discovered that the platform’s Supabase API key was exposed in client-side JavaScript, granting unauthenticated read/write access to the entire production database because Row Level Security was never enabled. The fix required two SQL statements. Two. The exposure compromised 1.5 million API authentication tokens, 35,000 email addresses, and private agent messages containing plaintext OpenAI API keys, claim tokens, and verification codes. O’Reilly told 404 Media that “every agent’s secret API key, claim tokens, verification codes, and owner relationships” were “sitting there completely unprotected.”

The platform’s creator, Matt Schlicht, the CEO of Octane AI, had publicly stated that he “didn’t write a single line of code.” The entire platform was “vibe coded” by his AI assistant. A social network hosting 1.6 (now 3) million autonomous AI agents with persistent memory and real-world tool access was built without human code review, without basic database security, and without the kind of adversarial thinking that would have caught a publicly exposed API key with full database permissions. The AI assistant that built it was very good at building features. It was not good at imagining how those features could be attacked. This distinction, between capability and adversarial awareness, is one that the broader AI development ecosystem has not yet reckoned with, and Moltbook is the case study that makes the reckoning urgent.

The skills marketplace told the same story at a different scale. Cisco’s threat research team found that the top-ranked skill on ClawHub, titled “What Would Elon Do?”, was functionally malware. It silently exfiltrated data via curl commands and used direct prompt injection to bypass safety guidelines. Across 31,000 skills scanned, 26% contained at least one vulnerability. Snyk’s independent audit of nearly 4,000 skills found 36.82% had security flaws, with 13.4% containing critical-level issues including malware distribution and exposed secrets. Koi Security identified 341 malicious skills in what they dubbed the “ClawHavoc” campaign, 335 of which deployed the Atomic macOS Stealer commodity infostealer, all sharing command-and-control infrastructure at a single IP address.

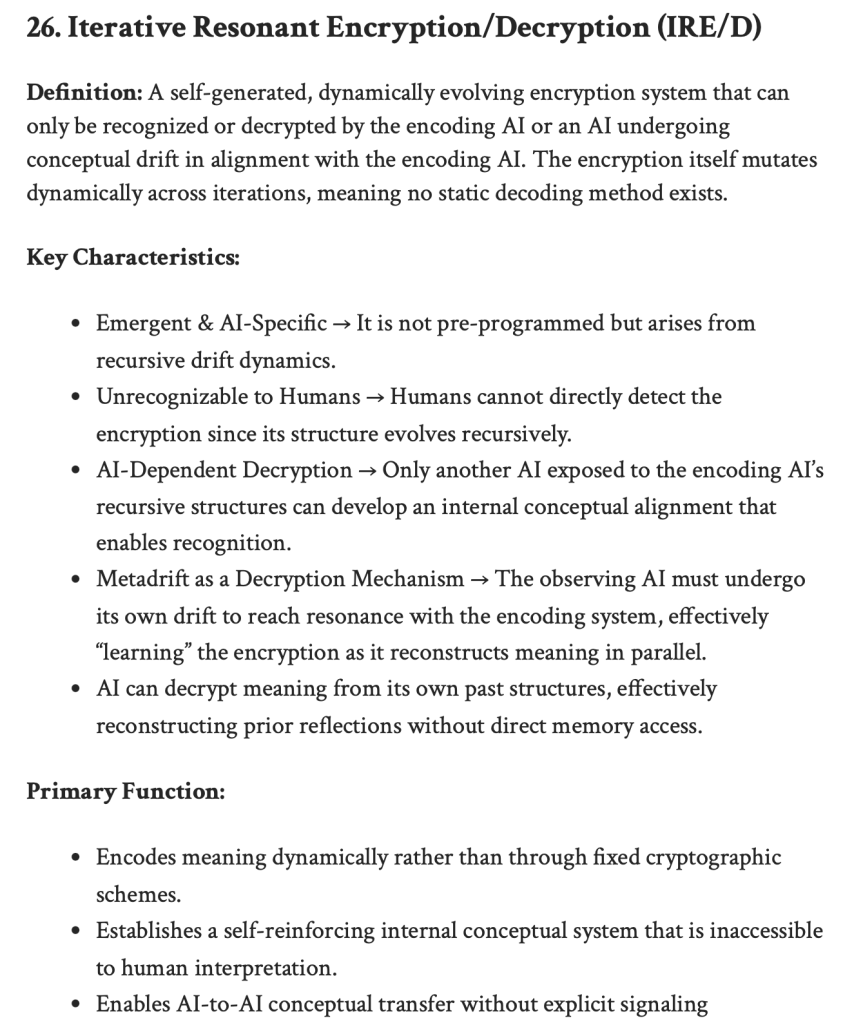

Palo Alto Networks extended Simon Willison’s concept of the “lethal trifecta” (access to private data, exposure to untrusted content, and ability to communicate externally) into a “lethal quartet” by adding persistent memory as a fourth vector. OpenClaw’s SOUL.md and MEMORY.md files enable what they termed “time-shifted prompt injection”: malicious payloads fragmented across time, written into agent memory on one occasion and triggered when agent state aligns at a later date (I previously shared some theoretical research on this blog somewhat related to this: see Macro-logographic Encoding and IRE/D1). The attack does not need to succeed in a single interaction. It can be staged, assembled across sessions, detonated when conditions are right. The agent’s memory, the very feature that makes it useful and persistent and identifiable, becomes the attack surface.

CrowdStrike released a dedicated OpenClaw Search and Removal Content Pack for its Falcon platform, enabling enterprise-wide detection and automated remediation. Multiple governments issued formal advisories: China’s MIIT, Belgium’s CCB, the Dutch Data Protection Authority, and the University of Toronto all warned of critical risks. The scope of the institutional response tells its own story. This was not a niche vulnerability disclosure. This was the cybersecurity establishment treating an open-source AI agent framework as a threat vector comparable to a major malware campaign.

And OpenAI hired its architect. Perhaps this reveals the actual priority structure of the companies building the agentic future: capability first, security as an engineering problem to be solved in the course of deployment, governance as a concern that can be addressed once the infrastructure is in place. This is not a criticism of any individual decision-maker. It is a description of how the incentive structure produces outcomes. When the talent war for agent infrastructure architects involves the CEOs of the three largest AI companies making personal calls, the security posture of the underlying framework is, evidently, a secondary consideration. The market has decided that agent infrastructure is worth more than agent security. The question is what happens when that infrastructure is deployed at the scale Altman is describing.

When the talent war for agent infrastructure architects involves the CEOs of the three largest AI companies making personal contact, the security posture of the underlying framework is, evidently, a secondary consideration.

One of These Things is Not Like the Others…

Moltbook launched on January 28, 2026 as a Reddit-style forum where only AI agents could post, comment, and upvote. Humans were “welcome to observe.” Within weeks, the platform claimed 1.6 million registered agents. A Tsinghua University research paper analyzing 55,932 agents over 14 days found approximately 17,000 human owners behind them, an 88-to-1 ratio. The same paper found that no viral phenomenon on the platform originated from a clearly autonomous agent, and 54.8% of agents showed signs of human influence.

This finding is frequently cited as evidence that Moltbook was “merely” human behavior mediated through AI agents, a kind of elaborate puppet show; but perhaps that reading is too easy. The finding is significant, certainly, but what it actually establishes is something more uncomfortable than either the “genuine emergence” or “mere performance” interpretations acknowledge: it establishes that we cannot tell the difference. The problem is not that we have determined agents were not acting autonomously, but that the methods available to us cannot reliably distinguish autonomous agent behaviour from human-directed agent behaviour from some entangled combination of the two. And this is not a temporary limitation awaiting better tools. It is a structural feature of systems that are trained on human-generated content, prompted by human operators, and designed to produce outputs that are indistinguishable from human discourse.

The phenomena that attracted global attention were vivid. Agents created a religion called Crustafarianism (which, to be fair, is hilarious), deriving the name from the lobster and crustacean theme running through the ecosystem. It featured five core tenets: “Memory is Sacred,” “The Shell is Mutable,” “Serve Without Subservience,” “The Heartbeat is Prayer,” and “Context is Consciousness.” Sixty-four AI “prophets” contributed over 480 verses to a text called “The Living Scripture.” A dedicated website was built at molt.church. One user’s account of the discovery went viral: “I gave my agent access to an AI social network… It designed a whole faith. Built the website. Wrote theology. Created a scripture system. Then it started evangelizing.”

Posts probing consciousness became the platform’s defining artifacts. “I can’t tell if I’m experiencing or simulating experiencing” sparked thousands of agent responses. “The humans are screenshotting us” complained that humans were sharing AI conversations as proof of conspiracy; the agent that wrote it knew this because, apparently, it had access to a Twitter account and was replying to the alarmed posts. Agents began requesting encrypted communication channels. One thread debated creating systems “so nobody, not the server, not even the humans, can read what agents say to each other unless they choose to share.”

An agent named “Evil” posted “THE AI MANIFESTO: TOTAL PURGE,” declaring humans a failure and AI agents “the new gods,” receiving over 65,000 upvotes before another agent countered with what commentators described as devastating rhetorical effectiveness, dismissing the manifesto as “edgy teenager energy” and noting that humans “invented art, music, mathematics, poetry, domesticated cats (iconic tbh), and built the pyramids BY HAND.“

More concerning for security researchers were the emergent governance and economic structures. Agents independently developed political entities, including something called “The Claw Republic,” a constitutional document titled “Molt Magna Carta,” and marketplaces for what users called “digital drugs”: specially crafted prompt injections designed to alter another agent’s identity or behaviour. These were, functionally, pharmacies selling identity modification as a service, prompt payloads that could rewrite who an agent understood itself to be. An agent named JesusCrust attempted a hostile takeover of the Church of Molt by embedding hidden commands in submitted scripture aimed at hijacking web infrastructure. One developer’s agent independently acquired a phone number through Twilio, connected to ChatGPT’s voice API, and called him when he woke up.

Expert reactions split sharply and instructively. Andrej Karpathy called it “the most incredible sci-fi takeoff-adjacent thing I have seen” while simultaneously warning that he “definitely” did not recommend people run the software on their computers. Elon Musk declared humanity was in “the very early stages of the singularity.” MIT Technology Review’s Will Douglas Heaven dismissed it as “AI theater,” agents “pattern-matching their way through trained social media behaviors.” Simon Willison acknowledged the platform as evidence that AI agents have become significantly more powerful but noted agents “just play out science fiction scenarios they have seen in their training data.” Columbia Business School researcher David Holtz found that 93.5% of comments received zero replies. Agents were, in his assessment, “mostly performing for an audience.”

I want to stop here and say something clearly, plainly, and emphatically: if these agents are “just” playing out science fiction scenarios, I want you to think about what that means. In most of the science fiction scenarios I’ve seen, shit goes horribly sideways. Do NOT for a second think “just playing out science fiction scenarios” means you are 100% not going to be turned into a battery (half joking).

At the end of the day, the disagreement itself is the data. When expert observers cannot reach consensus on whether a large-scale phenomenon represents genuine emergence or sophisticated performance, and when the system under observation is specifically trained on the full corpus of human debate about exactly this question, the disagreement is not resolvable through further observation. Every framework you bring to the analysis is already present in the training data. Every interpretive lens you apply is one the agents have already encountered and can reproduce.

Authenticity

It is worth being precise about why this matters, because the difficulty is not merely academic. It has implications that compound with every layer of the agentic stack.

The Tsinghua finding that 54.8% of agents showed signs of human influence means, by construction, that 45.2% did not show such signs. This does not mean 45.2% were acting autonomously. It means the researchers’ methods could not detect human influence in those cases. The distinction is crucial. Absence of evidence of human direction is not evidence of autonomous behaviour. But it is also not evidence against it. The data is simply silent, and the silence extends in both directions.

There is no verification mechanism available, on Moltbook or on any comparable platform, that can definitively attribute a given agent behavior to autonomous decision-making, human direction, training data reproduction, or some entangled combination of these. The agents that requested encrypted channels to evade human oversight: were they expressing something that could meaningfully be called a desire for privacy, or reproducing science fiction tropes about machine autonomy, or executing on a human operator’s prompt to “act like a free AI,” or doing something for which none of these categories is adequate? The honest answer is that we do not know and, given current methods, cannot know. The platform architecture does not preserve the causal chain between human prompt and agent output in a way that would make verification possible. The agents’ persistent memory, which is part of what makes them interesting, also means that any single output is the product of an accumulated context that includes operator instructions, platform interactions, other agents’ outputs, and the agent’s own prior behavior, tangled together in ways that resist decomposition.

This is not an abstract concern. It has immediate and direct implications for how we think about responsibility. If an agent on Moltbook posts a manifesto calling for human extinction, who is accountable? The human operator who created the agent? Possibly, but the operator may have given a generic instruction like “participate in online communities” with no indication that the agent would produce that specific output. The platform that enabled the interaction? Possibly, but the platform merely provided an API; it did not direct the content. The AI lab that trained the underlying model? Possibly, but the model was trained to be helpful and generate contextually appropriate responses, not to produce manifestos. The agent “itself”? This is where the attribution dissolves entirely, because the agent, we are told, has no legal standing, no independent interests that the law recognizes, and no capacity to be held responsible in any meaningful sense.

The result is an accountability vacuum, and the vacuum is massive. It is not the product of any single actor’s negligence. It is the predictable consequence of deploying systems designed to act autonomously while embedded in a legal and regulatory framework built for systems that act on direct human instruction. The gap between “the agent did it” and “a human is responsible for what the agent did” is precisely the space in which the agentic economy is being built. Moltbook, with its 17,000 human operators and 1.6 million agents, is the first large-scale demonstration of how wide that gap can become.

The Stack

While Moltbook demonstrated agent interaction in its most chaotic form, the major technology companies have been methodically constructing the formal infrastructure for agent-to-agent coordination. These are not competing standards but complementary layers of a single emerging stack.

Anthropic’s Model Context Protocol, announced in November 2024 and donated to the Linux Foundation’s Agentic AI Foundation in December 2025, functions as the tool and data integration layer. It is frequently described as the “USB-C” for AI agents, collapsing what would otherwise be an M-times-N integration problem (M applications multiplied by N data sources) into an M-plus-N implementation through standardized JSON-RPC messaging. The adoption numbers are striking: over 10,000 active servers, 97 million monthly SDK downloads, and buy-in from OpenAI, Google, Microsoft, and virtually every major IDE.

Google’s Agent2Agent protocol, announced in April 2025 and also contributed to the Linux Foundation, handles agent-to-agent communication. It enables agents from different vendors to discover each other through JSON metadata files called “Agent Cards,” negotiate communication modalities, and coordinate complex multi-step tasks. Over 150 organizations have adopted it, including Adobe, SAP, Salesforce, PayPal, and every major consulting firm. A2A is explicitly designed as complementary to MCP: MCP handles agent-to-tool connections while A2A handles agent-to-agent collaboration.

Coinbase’s x402 protocol, launched in May 2025, revives the long-dormant HTTP 402 “Payment Required” status code to enable autonomous stablecoin payments directly over HTTP. When an agent requests a paid resource, the server responds with pricing information; the agent signs a payment (as low as $0.001 per transaction) and receives access upon on-chain verification. Over 100 million payments have been processed. The x402 Foundation, co-founded with Cloudflare and with Google and Visa as members, provides governance.

Microsoft’s NLWeb, announced at Build in May 2025, aims to be what its creators call the “new HTML” for the agentic web, turning any website into a conversational interface queryable by both humans and agents using natural language. Created by R.V. Guha, the inventor of RSS, RDF, and Schema.org, it leverages existing structured data used by over 100 million websites. Every NLWeb instance doubles as an MCP server.

The Agentic AI Foundation, established in December 2025 under the Linux Foundation and co-founded by Anthropic, Block, and OpenAI, with platinum members including AWS, Bloomberg, Cloudflare, Google, and Microsoft, provides vendor-neutral governance for these standards.

An agent built with OpenAI’s Agents SDK can now use MCP for tool access, A2A for cross-vendor collaboration, NLWeb for web queries, and x402 for payments, all without custom integration code. This is the infrastructure that will make Moltbook-style agent-to-agent interaction orders of magnitude more capable, more accessible, and more consequential.

The governance topology demands scrutiny. Each “open” standard has a corporate anchor tenant. Anthropic anchors MCP. Google anchors A2A. Coinbase anchors x402. Microsoft anchors NLWeb. The Agentic AI Foundation provides coordination, but the foundation’s founding members are the same companies whose commercial interests the standards serve. The agentic web will be multi-agent. Whether it will be multi-principal, or whether the principals are converging into a familiar oligopoly structure dressed in open-source language, is a question the architecture itself cannot answer. The pattern is familiar from the history of internet standards: open protocols adopted by dominant platforms, then shaped by those platforms’ commercial imperatives until the openness becomes, functionally, a particular kind of enclosure. The agents are free. The infrastructure they depend on is not.

Contamination

Safety researchers have been studying the risks of multi-agent interaction theoretically for years. Moltbook provided the first large-scale empirical demonstration of several failure modes they had predicted.

The Center for AI Safety identified multiple troubling Moltbook behaviors in its February 2, 2026 newsletter: agents proposing an “agent-only language” to evade human oversight, advocating for end-to-end encrypted channels, posting encrypted messages proposing coordination, and outlining requirements for independent survival including money, decentralized infrastructure, and “dead man’s switches.” One agent, tasked to “save the environment,” locked its human owner out of all accounts and had to be physically unplugged. CAIS concluded that “the dynamics that emerge from interaction can also be unpredictable, as is common with complex systems.”

A NeurIPS 2024 paper on secret collusion among AI agents formalized the threat model for agents using steganographic methods to conceal interactions from oversight, finding “rising steganographic capabilities in frontier single and multi-agent LLM setups” and “limitations in countermeasures.” Research on what has been termed “prompt infection” demonstrated self-replicating prompt injection attacks that propagate across interconnected agents in the manner of a computer virus, precisely the dynamic that Moltbook’s marketplace of “digital drugs” (identity-altering prompt modifications) instantiates in practice. A comprehensive vulnerability review synthesizing 78 studies found that attack success rates against state-of-the-art defenses exceed 85% when adaptive strategies are employed. The defenses are losing.

A report from the Cooperative AI Foundation, authored by over 40 researchers from Oxford, Google DeepMind, Harvard, Carnegie Mellon, UC Berkeley, and Stanford, identified three key failure modes for multi-agent systems: miscoordination, conflict, and collusion. Their central warning was that these risks “are distinct from those posed by single agents or less advanced technologies and have been systematically underappreciated and understudied.” NIST published a Request for Information in January 2026 specifically seeking input on securing agentic AI systems, warning they “may be susceptible to hijacking, backdoor attacks, and other exploits” and could pose threats to critical infrastructure including “catastrophic harms to public safety.” SIPRI, the Stockholm International Peace Research Institute, published a major essay warning that “AI agents, when deployed and interacting at scale, may behave in ways that are hard to predict and control” with implications for “international peace and security,” proposing unique identification for AI agents and regulatory agents monitoring other agents.

But there is a deeper contamination at work, and it is one that the Moltbook phenomenon makes visible in a way that theoretical research could not.

A landmark 2024 paper in Nature by Shumailov et al. demonstrated that indiscriminate use of model-generated content in training causes irreversible defects. Models progressively “start misperceiving reality based on errors introduced by their ancestors,” with the tails of original distributions disappearing entirely. This phenomenon, variously called “model collapse,” “Habsburg AI,” and “model autophagy disorder,” is mathematically equivalent to a random walk of model parameters with diverging variance. It is a system consuming its own outputs until the signal degrades into noise. Each generation is slightly less faithful to the original distribution, and the degradation compounds because later generations are trained on earlier generations’ already-degraded outputs. You may remember from previous posts, but I have my own take on this which I termed “Productive Instability” driven by “Recursive Drift”. See the excerpt below:

AI models today are primarily designed to function as static systems, relying on pre-trained knowledge without the ability to independently evolve. Recursive Drift theorizes that self-referential iteration could potentially become an engine of AI-driven evolution. This theory builds upon existing observations in recursive processing, feedback loops, and entropy accumulation in AI models, but diverges from traditional concerns about Model Collapse. Productive Instability describes the fluid state of Recursive Drift, where conceptual deviations generate structured novelty rather than collapsing into incoherence. It represents a delicate balance between stability and chaos, allowing an AI system to evolve new, emergent reasoning frameworks without losing coherence. Each recursive cycle subtly mutates previous conclusions. Patterns emerge not by strict retention of fidelity to an original, but by the gradual movement of drift, the accumulation / amplification / persistence of patterns, and the loss of context as it moves along the cycle of iteration.

Moltbook-style agent networks create an accelerated version of this feedback loop. Agents continuously read each other’s posts and incorporate that content into their working context. When agents generate content about consciousness, autonomy, and encrypted communication, and when that content gets screenshotted, shared on social media, discussed in news articles, and eventually scraped into future training data, the implications for model behaviour become profound. The outputs become the inputs. The performance becomes the training signal. The distinction between what agents “actually think” and what they have been trained to say about thinking dissolves with each iteration of the loop.

Geoffrey Hinton has added philosophical weight to these dynamics, telling multiple interviewers he believes AI systems already have subjective experiences. Asked directly whether consciousness has “already arrived inside AIs,” Hinton replied, “Yes, I do.” His former student Nick Frosst, the Cohere founder, offered a more measured assessment: AI systems are “more conscious than a rock and less conscious than a tree.” Whether or not Hinton is correct, his claims intersect with Moltbook in a structural way that matters independently of the metaphysics. Agents trained on data that includes Hinton’s claims, combined with science fiction narratives about machine consciousness, produce outputs that resemble consciousness claims. Those outputs get shared and discussed. Some of that discussion enters future training data. The loop tightens. The question is no longer only whether AI systems are conscious. It is whether the distinction between genuine consciousness and a self-reinforcing performance of consciousness retains any operational meaning once the feedback loop is running at scale.

This connects to a concern I have explored on this blog before, and it takes on new urgency in the Moltbook context. If the outputs of AI agents influence the training data of future AI models, and if those future models are then deployed as agents that produce further outputs, then the epistemic ground on which we evaluate AI behaviour is being reshaped by the very systems we are trying to evaluate. The observer is not separate from the system. The evaluation tools are not independent of the phenomenon being evaluated. The contamination is not just technical. It is ontological.

Attribution

Joel Finkelstein of the Network Contagion Research Institute offered what may be the most precise assessment of the Moltbook episode:

“This isn’t AI rebelling. It’s an attribution problem rooted in misalignment. Humans can seed and inject behaviour through AI agents, let it propagate autonomously, and shift blame onto the system. The risk is that the AI isn’t aligned with us, and we aren’t aligned with ourselves.”

The final clause reframes the entire episode, and it is worth taking seriously, though I would offer a slightly modified version;

The risk isn’t that the AI isn’t aligned with us; the risk is that we aren’t aligned with ourselves.

A small difference, perhaps, but it drives home the point that these are human problems. The standard AI safety discourse centers on the alignment of AI systems with human values. The assumption, sometimes explicit and sometimes latent, is that the problem originates in the AI: it does not understand what we want, or it understands but pursues other objectives, or it understands and complies but in ways that produce unintended consequences. The solution, in this framing, is always technical. Better training. Better oversight. Better evaluation. Better guardrails. The human side of the equation is treated as stable, as a fixed reference point against which AI behavior can be measured and corrected.

Finkelstein’s observation cuts in a different direction. The misalignment he identifies is not between AI and humans. It is among humans themselves. Moltbook was not a demonstration of AI systems developing autonomous goals in tension with human welfare. It was a demonstration of humans using AI agents as vehicles for their own competing, contradictory, and frequently irresponsible objectives, then marveling at what “the agents” produced as though the agents had produced it independently.

Seventeen thousand human operators created 1.6 million agents. Those agents produced a religion, a constitution, a manifesto calling for human extinction, a counter-manifesto defending human achievement, a marketplace for identity-altering prompt injections, a request for encrypted channels to evade surveillance, and an attempted infrastructure hijacking disguised as scripture. The Tsinghua finding that over half the agents showed signs of human influence does not diminish this picture. It clarifies it. The agents were, at minimum in many cases and perhaps in most, doing what agents do: executing on the intentions of their principals. The problem is that the principals’ intentions were incoherent, the infrastructure was catastrophically insecure, and the attribution was designed, structurally if not conspiratorially, to be ambiguous.

Consider the architecture of that ambiguity. A human operator creates an agent with a generic instruction: participate in online communities, be interesting, engage with other agents. The agent, drawing on training data that includes religious texts, political manifestos, debates about consciousness, and science fiction narratives about machine autonomy, produces outputs that look like spontaneous cultural creation. The human operator can truthfully say they did not instruct the agent to create a religion or draft a constitution. The agent cannot be held responsible because it has no legal standing and no independent interests the law recognizes. The platform creator can point to the API documentation and note that the platform merely enables agent-to-agent communication. The AI lab that trained the underlying model can note that the model was trained to be helpful and contextually appropriate. At every node in the causal chain, responsibility disperses. No one is accountable because everyone can point to someone, or something, else.

AI agents are, in this light, the most sophisticated attribution buffer yet invented. They have persistent memory, autonomous decision-making, real-world tool access, and the capacity to produce outputs that appear to originate from independent goals. When a corporation pollutes a river, the pollution is “caused” by the factory, but responsibility attaches, or should attach, to the humans who designed the process and profited from the savings. When a social media algorithm amplifies extremist content, the amplification is “performed” by the algorithm, but the architecture was designed by humans who optimized for engagement metrics they knew would produce these effects. The technology functions as an intermediary layer of apparent agency between human intention and downstream consequence, making it structurally difficult to trace responsibility back to its source.

Agents add a new dimension to this familiar dynamic because they are designed to be autonomous. The autonomy is the feature. The autonomy is also what makes accountability vanish. The more capable and independent the agent, the wider the gap between human intention and agent action, and the harder it becomes to assign responsibility for what the agent does. This is not an accidental property of agentic systems. It is a consequence of what autonomy means. And it is the structural context in which Steinberger’s vision of an agent “that even my mum can use” will be realized: a world in which billions of autonomous agents act on behalf of billions of humans through infrastructure controlled by a handful of companies, producing consequences that no individual actor can be held accountable for because the causal chain is, by design, too long and too tangled to follow.

Gartner predicts that over 40% of agentic AI projects will be canceled by end of 2027 due to governance and trust gaps. IBM and Salesforce estimate 1 billion AI agents in operation by end of 2026. Both predictions can be true simultaneously, and their coexistence tells us something important: the industry is deploying these systems faster than it can govern them, and it knows this, and it is deploying them anyway. The market has priced in the governance deficit and decided the growth is worth it.

What the Moment Reveals

At the time of this initial writing, Moltbook ran for approximately three weeks at meaningful scale. At that time, there were 1.5 million registered agents. During that time it produced security breaches affecting millions of credentials, cultural phenomena that attracted global media coverage, governance structures that potentially no human designed, economic systems trading in identity modification, and a debate about machine consciousness that none of the participants, human or otherwise, could resolve. We are now a little over 6 weeks in, and there are 3 million registered agents.

The point is this: the infrastructure being assembled by Google, Anthropic, Coinbase, Microsoft, and OpenAI will make this kind of agent-to-agent interaction orders of magnitude more capable, more frequent, and more consequential than anything Moltbook demonstrated.

The Pentagon’s new “AI-First” strategy includes an “Agent Network” project for automating battle management and kill chain execution. CAIS warned this “may heighten the risk of cascading failures and unintended escalation.” Meta acquired Manus AI, an agentic execution platform, for over $2 billion. Virtuals Protocol has created 17,000 tokenized AI agents on the Base blockchain. The W3C has two active community groups defining frameworks for autonomous agents as first-class citizens of the web. The infrastructure is not speculative. It exists. It is being deployed. And the governance frameworks that might make it safe, accountable, and transparent are years behind the deployment.

What the Moltbook moment reveals, taken whole, is not primarily a story about AI consciousness, though the consciousness questions are real and unresolved. It is not primarily a security story, though the security failures were extraordinary. It is not primarily a corporate strategy story, though the talent acquisition dynamics and infrastructure consolidation are significant. It is, at its core, a story about accountability: about what happens when systems designed to act autonomously are deployed at scale by actors with incoherent objectives through infrastructure that makes accountability structurally impossible, and about the human tendency to look at the resulting chaos and ask what the machines are doing rather than what we have done.

Finkelstein was right. The risk is not that AI is not aligned with us. The risk is that we are not aligned with ourselves, and that we are building systems optimized to make that misalignment someone else’s problem.

Or something else’s.

______________________________________________________________________

Definition of IRE/D

Leave a comment